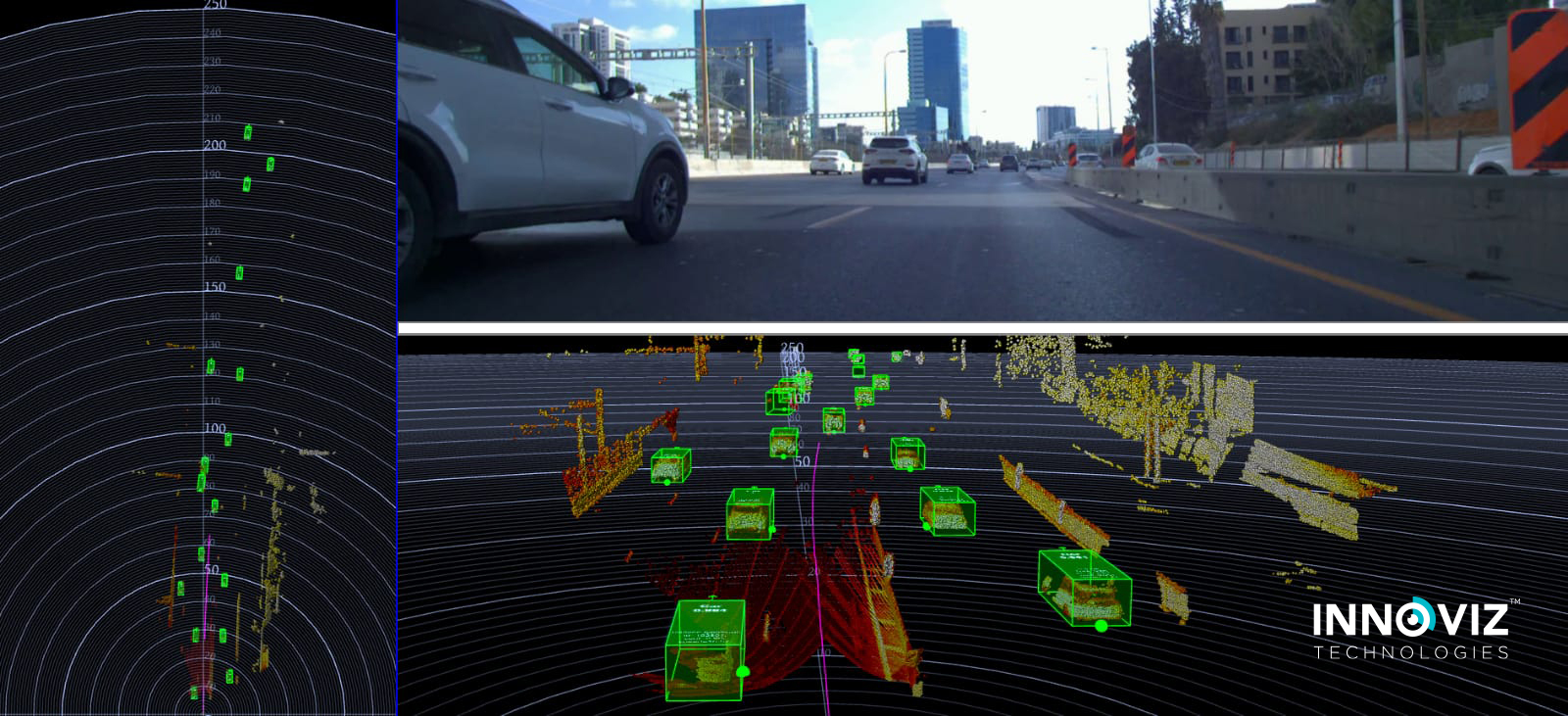

Object Detection, Classification, and Tracking

Detects objects with high precision. Two independent detectors identify objects (e.g. cars, trucks, motorcycles, pedestrians, bicycles): one by their shape and other visual attributes, the other by their movement. Delivers both high-quality object detection and more advanced object tracking (the ability to recognize the same object across consecutive frames).
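To make "recognizing the same object across consecutive frames" concrete, here is a minimal sketch of one common, generic approach: greedy frame-to-frame association by bounding-box overlap (intersection over union, IoU). This is not the tracker described above. The names `IoUTracker` and `iou`, the `(x1, y1, x2, y2)` box format, and the 0.3 overlap threshold are all illustrative assumptions, and the detections are assumed to come from some upstream detector.

```python
from itertools import count

# Hypothetical box format: (x1, y1, x2, y2), top-left and bottom-right corners.
Box = tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


class IoUTracker:
    """Illustrative tracker: keeps an object's ID while its box overlaps enough between frames."""

    def __init__(self, threshold: float = 0.3):
        self.threshold = threshold          # assumed minimum IoU to count as "same object"
        self.tracks: dict[int, Box] = {}    # track ID -> box from the previous frame
        self._ids = count(1)                # source of fresh track IDs

    def update(self, detections: list[Box]) -> list[tuple[int, Box]]:
        # Score every (previous track, new detection) pair, best overlap first.
        pairs = sorted(
            ((iou(box, det), tid, di)
             for tid, box in self.tracks.items()
             for di, det in enumerate(detections)),
            reverse=True,
        )
        assigned: dict[int, int] = {}  # detection index -> track ID
        used_tracks: set[int] = set()
        for score, tid, di in pairs:
            if score < self.threshold:
                break  # remaining pairs overlap too little to be matched
            if tid in used_tracks or di in assigned:
                continue  # each track and each detection is matched at most once
            assigned[di] = tid
            used_tracks.add(tid)

        # Unmatched detections start new tracks; unmatched old tracks are dropped.
        results: list[tuple[int, Box]] = []
        new_tracks: dict[int, Box] = {}
        for di, det in enumerate(detections):
            tid = assigned.get(di)
            if tid is None:
                tid = next(self._ids)
            new_tracks[tid] = det
            results.append((tid, det))
        self.tracks = new_tracks
        return results
```

For example, feeding frame 1 with boxes `(0, 0, 10, 10)` and `(50, 50, 60, 60)` assigns IDs 1 and 2; if frame 2 contains `(52, 51, 62, 61)` and `(1, 0, 11, 10)`, they keep IDs 2 and 1 respectively because each overlaps its predecessor. Greedy matching keeps the sketch short; more complete trackers typically use optimal assignment, motion prediction, and keep unmatched tracks alive for a few frames instead of dropping them immediately.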